The transformation of text to speech technology has been nothing short of revolutionary. What once produced mechanical, robotic voices that were barely comprehensible has evolved into sophisticated AI-powered systems that deliver remarkably human-like speech. Today’s AI text to speech solutions can capture emotional nuances, adjust speaking styles, and even clone specific voices with striking accuracy. This technological leap has opened doors for countless applications, from making digital content accessible to people with visual impairments to letting content creators produce professional voiceovers without expensive studio equipment.

Modern text to voice technology serves an incredibly diverse audience. Publishers use these tools to create audiobooks, educators develop engaging learning materials, businesses generate multilingual customer communications, and individuals with reading difficulties gain independence through audio conversion. The challenge lies not in finding a text to speech solution, but in selecting the right one from an increasingly crowded marketplace of AI voice platforms.

This comprehensive guide evaluates the leading text to speech voices and platforms available today, examining everything from voice quality and customization options to pricing models and technical integration capabilities. You’ll discover which solutions excel for specific use cases and gain the insights needed to make an informed decision for your unique requirements.

Understanding Text to Speech Technology in 2026

At its core, text to speech (TTS) converts written text into spoken audio. Modern AI text to speech systems do this with sophisticated models that convey emotion, intonation, and personality instead of the robotic output of earlier generations, making digital content more accessible and engaging than ever before.

The current landscape of text to voice solutions spans from basic utility applications to advanced conversational AI systems. These tools serve diverse needs, from accessibility support for visually impaired users to content creation for podcasts, audiobooks, and educational materials. Understanding the underlying technology helps users make informed decisions when selecting the right voice synthesis solution for their specific requirements.

How AI Transforms Voice Synthesis

Artificial intelligence has fundamentally changed how text to speech voices are created and delivered. Traditional systems required extensive manual programming and voice actor recordings, while modern AI approaches can generate entirely new voices or clone existing ones with minimal training data. Machine learning algorithms analyze patterns in human speech, including rhythm, stress, and emotional nuances, to produce remarkably authentic vocal outputs.

Contemporary AI text to speech platforms can adapt to context, adjusting tone and pacing based on punctuation, sentence structure, and even semantic meaning. For instance, these systems recognize questions and apply rising intonation, or slow down for complex technical terms. This contextual awareness creates a more natural listening experience that closely mimics human speech patterns.

The integration of large language models has further enhanced voice synthesis capabilities. These systems can now understand context beyond individual sentences, maintaining consistent vocal characteristics throughout longer passages while adapting to different content types, whether reading news articles, creative fiction, or technical documentation.

Neural Networks vs Traditional TTS

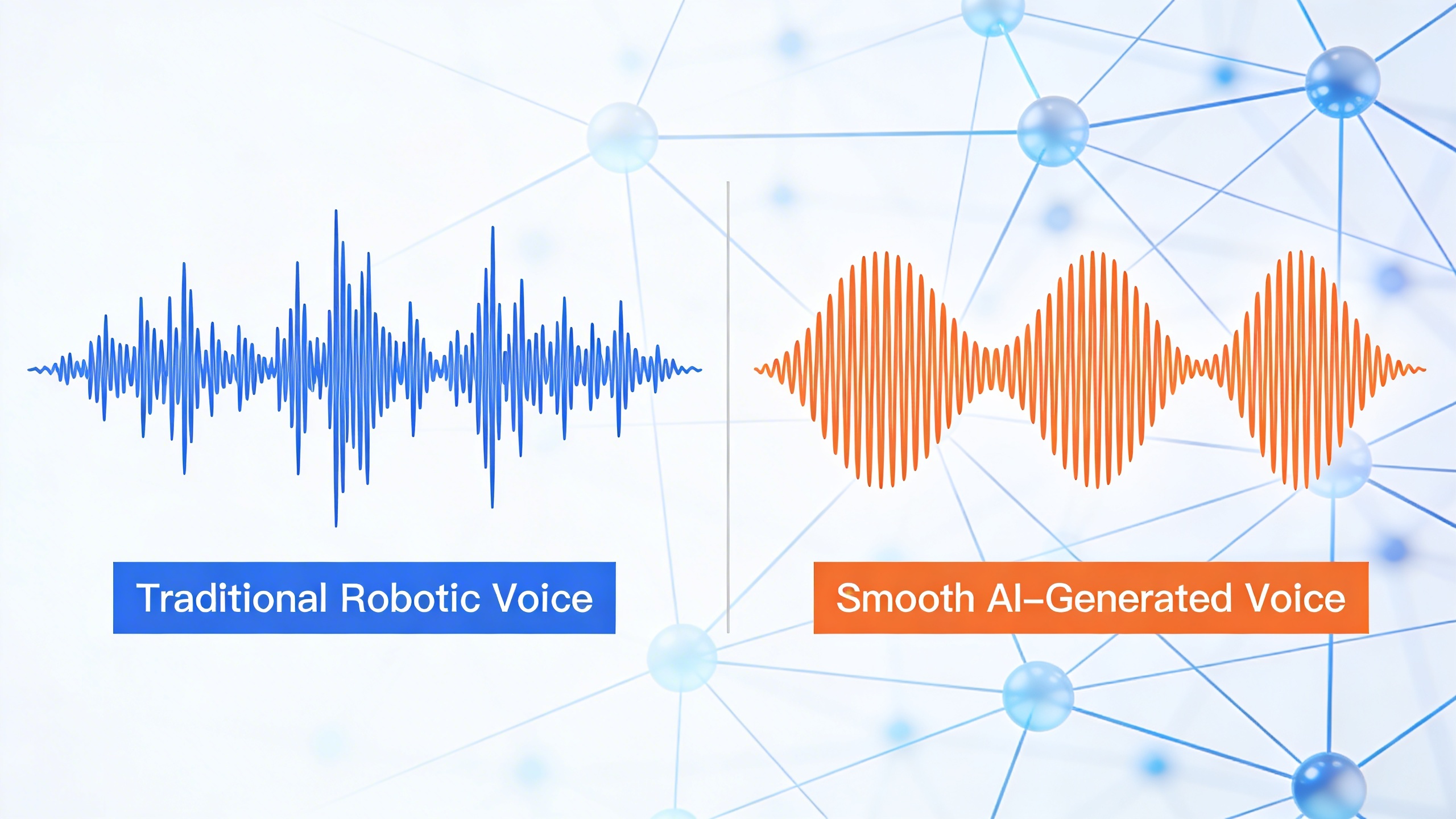

The distinction between neural network-based and traditional concatenative synthesis represents a fundamental shift in text to speech technology. Concatenative systems, the previous standard, worked by recording human speakers pronouncing phonemes, words, or phrases, then stitching these audio segments together to form complete sentences. While functional, this approach often produced choppy, unnatural-sounding speech with noticeable breaks between concatenated segments.
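To make the stitching idea concrete, here is a toy Python sketch that joins two recorded segments represented as lists of float samples. A hard splice leaves any level jump at the boundary intact, which is one source of the audible breaks described above; a short linear crossfade is one common way to soften such joins. This is an illustration under simplifying assumptions, not how any particular engine works: real unit-selection systems also choose units by matching pitch and duration, which is omitted here.

```python
def crossfade_concat(a: list, b: list, overlap: int) -> list:
    """Join two sample lists, blending the last/first `overlap` samples linearly.

    Toy illustration of smoothing a concatenation join; real engines do far more.
    """
    if not 0 <= overlap <= min(len(a), len(b)):
        raise ValueError("overlap must be between 0 and the shorter segment length")
    if overlap == 0:
        return a + b  # hard splice: any level jump at the join is left as-is
    start = len(a) - overlap
    blended = []
    for i in range(overlap):
        w = (i + 1) / (overlap + 1)  # weight of segment b ramps up across the overlap
        blended.append((1 - w) * a[start + i] + w * b[i])
    return a[:start] + blended + b[overlap:]


# Usage: fading from a loud segment into a silent one.
joined = crossfade_concat([1.0, 1.0, 1.0, 1.0], [0.0, 0.0, 0.0, 0.0], overlap=2)
print(len(joined))  # 6: two samples of overlap are shared
```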

Neural text to speech systems take a completely different approach, using deep learning models trained on vast datasets of human speech. These networks learn the complex relationships between text and audio at a fundamental level, generating speech waveforms directly rather than assembling pre-recorded pieces. The result is smoother, more natural-sounding output with better prosody and emotional expression.

WaveNet and similar neural architectures can model the subtle variations in human speech that make conversations feel natural. They handle co-articulation effects, where the pronunciation of one sound influences adjacent sounds, and can generate appropriate pauses, breathing sounds, and other human-like characteristics that traditional systems struggle to reproduce convincingly.

Voice Quality and Naturalness Factors

Several key factors determine the quality and human-likeness of text to voice output. Prosody encompasses rhythm, stress, and intonation patterns that convey meaning beyond the literal words. High-quality systems adjust these elements based on context, creating speech that sounds conversational rather than mechanical.

Emotional range is another crucial quality indicator. Advanced AI text to speech platforms can modulate vocal characteristics to express different emotions or speaking styles, from authoritative presentation tones to casual conversational delivery. This capability proves particularly valuable for content creators developing engaging audio experiences.

Voice consistency across different content types and lengths also affects perceived quality. The best systems maintain stable vocal characteristics while adapting appropriately to various text formats, whether social media posts, academic papers, or creative writing. Pronunciation accuracy for specialized terminology and support for multiple languages further distinguish premium text to speech solutions from basic alternatives.

Audio fidelity, measured in sample rates and bit depth, affects the overall listening experience. Professional-grade systems typically output high-resolution audio suitable for broadcast or commercial applications, while maintaining computational efficiency for real-time applications.
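Sample rate and bit depth translate directly into data volume for uncompressed (PCM) audio. A quick sketch of the arithmetic, assuming mono output (typical for speech) and bit depths that are whole bytes:

```python
def pcm_bytes_per_second(sample_rate_hz: int, bit_depth: int, channels: int = 1) -> int:
    """Uncompressed PCM data rate: samples per second x bytes per sample x channels."""
    if bit_depth % 8:
        raise ValueError("this sketch assumes whole-byte sample sizes (8, 16, 24, 32)")
    return sample_rate_hz * (bit_depth // 8) * channels


def pcm_megabytes_per_minute(sample_rate_hz: int, bit_depth: int, channels: int = 1) -> float:
    """Same rate expressed as decimal megabytes per minute of audio."""
    return pcm_bytes_per_second(sample_rate_hz, bit_depth, channels) * 60 / 1_000_000


for rate, depth in [(16_000, 16), (24_000, 16), (48_000, 24)]:
    print(f"{rate} Hz / {depth}-bit mono: {pcm_bytes_per_second(rate, depth):,} B/s, "
          f"{pcm_megabytes_per_minute(rate, depth):.2f} MB/min")
```

For example, 16 kHz/16-bit mono works out to 32,000 bytes per second (about 1.92 MB per minute), while 48 kHz/24-bit mono is 144,000 bytes per second (about 8.64 MB per minute). Compressed formats such as MP3 shrink these figures considerably.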

Top Text to Speech AI Platforms Compared

The text to speech landscape offers diverse solutions tailored to different user needs and technical requirements. Understanding which platform delivers the best value for your specific use case requires examining features, pricing structures, and target audiences across three distinct categories of voice technology solutions.

Enterprise-Grade TTS Solutions

Enterprise text to speech platforms prioritize scalability, customization, and integration capabilities. These solutions typically offer robust APIs, extensive voice libraries, and advanced neural network models that produce highly natural speech patterns.

Amazon Polly leads the enterprise space with over 60 realistic voices across 29 languages. The platform excels in cloud-based deployment scenarios, offering both standard and neural text to speech voices with SSML markup support for precise pronunciation control. Pricing follows a pay-per-character model starting at $4 per million characters, making it cost-effective for high-volume applications.

Google Cloud Text-to-Speech provides WaveNet technology that generates remarkably human-like speech patterns. The service supports 40+ languages and offers custom voice creation through AutoML. Enterprise customers benefit from batch processing capabilities and seamless integration with other Google Cloud services. Pricing begins at $4 per million characters for standard voices and $16 per million for WaveNet voices.

Microsoft Azure AI Speech (formerly part of Azure Cognitive Services) offers neural text to voice capabilities with real-time streaming and batch synthesis options. The platform stands out with its custom neural voice feature, allowing organizations to create branded voice experiences. Azure’s strength lies in its comprehensive developer tools and extensive documentation, with pricing starting at $4 per million characters.

| Platform | Voice Count | Languages | Starting Price | Key Strength |
|---|---|---|---|---|
| Amazon Polly | 60+ | 29 | $4/million chars | SSML Support |
| Google Cloud TTS | 40+ | 40+ | $4/million chars | WaveNet Quality |
| Microsoft Azure | 75+ | 45+ | $4/million chars | Custom Voices |
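Because all three services bill per character, a rough budget is simple arithmetic. The sketch below treats the starting prices quoted above ($4 per million characters for standard voices and $16 per million for Google's WaveNet voices) as assumptions; actual bills depend on voice tier, free allowances, and each provider's rules for counting characters such as SSML markup.

```python
# Starting prices quoted in the comparison above, treated as assumptions.
RATES_PER_MILLION_CHARS = {
    "standard": 4.00,   # "$4/million chars" starting price
    "wavenet": 16.00,   # Google Cloud WaveNet rate quoted above
}


def estimate_cost(characters: int, tier: str = "standard") -> float:
    """Pay-per-character cost in USD, ignoring free tiers and minimum charges."""
    if characters < 0:
        raise ValueError("characters must be non-negative")
    return round(characters / 1_000_000 * RATES_PER_MILLION_CHARS[tier], 2)


# Hypothetical manuscript: ~80,000 words at ~6 characters per word (incl. spaces).
manuscript_chars = 80_000 * 6
print(estimate_cost(manuscript_chars), estimate_cost(manuscript_chars, "wavenet"))
```

For that hypothetical 480,000-character manuscript, the estimate is $1.92 with standard voices and $7.68 at the WaveNet rate.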

Consumer-Friendly Voice Tools

Consumer-focused AI text to speech platforms emphasize ease of use, affordability, and immediate accessibility. These tools typically feature intuitive interfaces and require minimal technical knowledge while still delivering high-quality voice output.

Murf AI has gained popularity among content creators and educators with its studio-quality AI voiceover capabilities. The platform offers over 120 voices in 20+ languages, with an intuitive editor that allows users to adjust pace, emphasis, and pronunciation. Pricing starts at $19 per month for the basic plan, including commercial usage rights.

Speechify targets students and professionals who need to convert written content into audio format quickly. The platform excels in document processing, supporting PDF, Word, and web page conversion. Its mobile apps provide on-the-go text to voice functionality, making it ideal for commuters and busy professionals. Premium plans begin at $11.58 per month.

Natural Reader offers both online and downloadable text to speech solutions with a focus on simplicity and accessibility. The platform provides multiple voice options and supports various document formats. Free users can access basic features, while premium subscriptions starting at $9.99 per month unlock additional voices and commercial usage rights.

For users seeking comprehensive voice and transcription solutions, platforms like Sozai combine text to speech capabilities with AI-powered transcription features, offering a complete voice technology toolkit for professionals who work with both audio input and output.

Specialized Accessibility Platforms

Accessibility-focused text to speech platforms serve users with visual impairments, learning disabilities, or reading difficulties. These solutions prioritize clarity, customization options, and integration with assistive technologies.

NVDA (NonVisual Desktop Access) provides free, open-source screen reading capabilities with built-in text to speech functionality. The platform supports multiple speech synthesizers and offers extensive customization options for voice speed, pitch, and pronunciation. Its community-driven development ensures continuous improvements and broad hardware compatibility.

JAWS (Job Access With Speech) remains the gold standard for professional screen reading software. The platform offers advanced navigation features and sophisticated text to speech voices optimized for different content types. While expensive at $1,095 for the professional version, JAWS provides unmatched functionality for workplace accessibility compliance.

Read&Write by Texthelp focuses on literacy support with features designed for students and professionals with dyslexia or other reading challenges. The platform combines text to speech with word prediction, grammar checking, and vocabulary support. Educational pricing starts at $155 per year for individual licenses.

Voice Dream Reader specializes in mobile accessibility, offering premium text to speech voices and advanced document support on iOS and Android devices. The app excels in handling complex documents and provides detailed customization options for reading speed, highlighting, and voice selection. One-time purchase pricing at $14.99 makes it accessible for individual users.

Each category serves distinct user needs, from enterprise developers requiring robust APIs to students seeking affordable accessibility tools. The key to selecting the right AI text to speech platform lies in matching specific requirements with the strengths and limitations of each solution type.

Voice Quality and Customization Features

The quality and customization options of text to speech voices have reached remarkable sophistication in modern AI platforms. Today’s leading text to speech solutions offer extensive voice libraries with natural-sounding options that can adapt to specific use cases, from professional presentations to creative content production. Understanding these customization features helps users select the most appropriate AI text to speech platform for their unique requirements.

Natural Voice Selection Options

Contemporary text to speech platforms provide diverse voice libraries featuring hundreds of distinct voices across different demographics, speaking styles, and vocal characteristics. Premium AI text to speech services typically offer 20-50 high-quality voices per language, with options ranging from conversational and friendly tones to authoritative and professional delivery styles.

Voice quality assessment should focus on several critical factors. Naturalness refers to how closely the synthetic voice resembles human speech patterns, including proper intonation, rhythm, and breathing pauses. Advanced platforms utilize neural networks to generate voices that maintain consistent quality across different text types, from technical documentation to creative storytelling.

Many platforms categorize their text to speech voices by use case scenarios. Business-focused voices excel at delivering presentations and training materials with clear articulation and professional tone. Conversational voices work well for chatbots and virtual assistants, offering warm and approachable delivery. Narrative voices are optimized for audiobooks and long-form content, maintaining listener engagement throughout extended sessions.

Voice preview functionality allows users to test different options before committing to lengthy text conversion projects. Most platforms provide sample audio clips demonstrating how each voice handles various sentence structures, punctuation, and common phrases relevant to specific industries or applications.

Emotion and Tone Controls

Modern AI text to speech platforms incorporate sophisticated emotion and tone adjustment capabilities that transform static text into dynamic, expressive audio content. These controls enable users to modify speaking pace, pitch variation, emphasis patterns, and emotional undertones to match their intended message delivery.

Emotion control systems typically offer preset options such as neutral, excited, concerned, confident, or empathetic delivery styles. Advanced platforms provide granular adjustment sliders for parameters like enthusiasm level, speaking speed, and vocal stress patterns. These features prove particularly valuable for marketing content, educational materials, and customer service applications where emotional connection enhances message effectiveness.
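Under the hood, many platforms expose controls like these through SSML. The sketch below maps a few of the preset names mentioned above to standard SSML 1.1 `<prosody>` and `<emphasis>` attributes. The preset-to-attribute table is a hypothetical choice for illustration; vendor-specific emotion styles are not shown, and support for individual tags and values varies by engine and voice.

```python
from typing import Optional
from xml.sax.saxutils import escape

# Hypothetical mapping from preset style names to standard SSML 1.1 <prosody>
# attribute values; real platforms define their own presets and tuning.
PRESETS = {
    "neutral": {"rate": "medium", "pitch": "medium"},
    "excited": {"rate": "fast", "pitch": "high"},
    "concerned": {"rate": "slow", "pitch": "low"},
    "confident": {"rate": "medium", "pitch": "low", "volume": "loud"},
}


def styled_ssml(text: str, preset: str, emphasize: Optional[str] = None) -> str:
    """Wrap text in <prosody> for the preset, optionally stressing one phrase."""
    attrs = " ".join(f'{name}="{value}"' for name, value in PRESETS[preset].items())
    body = escape(text)  # escape &, <, > so the SSML stays well-formed
    if emphasize:
        phrase = escape(emphasize)
        # Stress only the first occurrence of the phrase.
        body = body.replace(phrase, f'<emphasis level="strong">{phrase}</emphasis>', 1)
    return f"<speak><prosody {attrs}>{body}</prosody></speak>"


print(styled_ssml("Sales rose 12% & costs fell.", "excited", emphasize="rose"))
```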

Tone customization extends beyond basic emotional settings to include professional adjustments like formality level, urgency indication, and audience-appropriate delivery styles. Business users can configure their text to voice output to match corporate communication standards, while content creators can adapt the same text for different audience segments by adjusting tone parameters.

Some platforms offer context-aware emotion detection that automatically adjusts vocal delivery based on text content analysis. This technology recognizes emotional cues within the written text and applies appropriate vocal modifications without manual intervention, streamlining the production process for large-scale content projects.

Multi-Language and Accent Support

Global text to speech platforms now support 50-100+ languages with multiple accent variations for major languages. This extensive coverage enables businesses to create localized content for international audiences while maintaining consistent brand voice characteristics across different markets.

Accent accuracy represents a crucial quality factor for professional applications. Leading platforms employ native speakers and regional linguistic experts to ensure pronunciation authenticity. For example, English language support typically includes American, British, Australian, Canadian, and Indian accent options, each with multiple voice personalities and speaking styles.
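When a requested accent is not available, applications usually need a fallback rule. A minimal sketch, assuming voices are keyed by BCP 47 locale tags such as `en-GB` and `en-IN`; the catalog, voice names, and fallback table are all hypothetical placeholders rather than any provider's real voice list:

```python
from typing import Dict, List, Optional, Tuple

# Hypothetical voice catalog keyed by BCP 47 locale tags; names are placeholders.
CATALOG: Dict[str, List[str]] = {
    "en-US": ["us-voice-a", "us-voice-b"],
    "en-GB": ["gb-voice-a"],
    "en-AU": ["au-voice-a"],
    "en-IN": ["in-voice-a"],
    "es-ES": ["es-voice-a"],
}

# Hypothetical explicit preferences for locales without their own voices.
PREFERRED_FALLBACK = {"en-CA": "en-US", "en-NZ": "en-AU"}


def pick_voice(requested: str, catalog: Optional[Dict[str, List[str]]] = None,
               default: str = "en-US") -> Tuple[str, str]:
    """Return (locale, voice): exact match, explicit fallback, same language, default."""
    catalog = CATALOG if catalog is None else catalog
    for locale in (requested, PREFERRED_FALLBACK.get(requested)):
        if locale and catalog.get(locale):
            return locale, catalog[locale][0]
    language = requested.split("-")[0].lower()
    for locale in sorted(catalog):  # sorted for a deterministic choice
        if locale.split("-")[0].lower() == language and catalog[locale]:
            return locale, catalog[locale][0]
    return default, catalog[default][0]


print(pick_voice("en-CA"))  # falls back to an en-US voice
```

The lookup tries the exact locale, then an explicit preference (here `en-CA` to `en-US`), then any voice for the same language, and finally a default.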

| Language Category | Typical Voice Count | Accent Variations | Quality Level |
|---|---|---|---|
| Major Languages (English, Spanish, French) | 15-25 voices | 3-5 regional accents | Premium neural quality |
| Regional Languages (German, Italian, Japanese) | 8-15 voices | 2-3 regional accents | High neural quality |
| Emerging Markets (Hindi, Arabic, Portuguese) | 5-10 voices | 1-2 regional accents | Standard to high quality |

Cross-language consistency features allow users to maintain similar vocal characteristics when producing content in multiple languages. This capability proves essential for international brands seeking unified audio branding across diverse markets while respecting local pronunciation preferences and cultural communication norms.

Integration and Technical Capabilities

Modern text to speech solutions require robust technical foundations to meet diverse application needs. Whether you’re building a mobile app, integrating voice features into existing software, or processing large volumes of content, understanding the technical capabilities of AI text to speech platforms helps ensure smooth implementation and optimal performance.

API and Developer Tools

Professional text to speech platforms provide comprehensive APIs that enable seamless integration across different programming environments. Leading services offer RESTful APIs with detailed documentation, SDKs for popular programming languages including Python, JavaScript, and Java, and webhook support for asynchronous processing workflows.
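As an example of what a REST integration looks like, the sketch below builds (but does not send) a request for Google Cloud Text-to-Speech's `v1/text:synthesize` endpoint using only the Python standard library. The body fields follow Google's v1 REST schema (`input`, `voice`, `audioConfig`); the access token is a placeholder, and obtaining one (for example via `gcloud auth print-access-token`) is outside this sketch.

```python
import json
import urllib.request

# Google Cloud Text-to-Speech v1 REST endpoint.
ENDPOINT = "https://texttospeech.googleapis.com/v1/text:synthesize"


def build_synthesize_request(text, language_code="en-US", voice_name=None,
                             audio_encoding="MP3", access_token="YOUR_ACCESS_TOKEN"):
    """Build (not send) a POST request; the token default is a placeholder."""
    voice = {"languageCode": language_code}
    if voice_name:
        voice["name"] = voice_name  # e.g. a specific WaveNet voice name
    body = {
        "input": {"text": text},
        "voice": voice,
        "audioConfig": {"audioEncoding": audio_encoding},
    }
    return urllib.request.Request(
        ENDPOINT,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {access_token}",
            "Content-Type": "application/json; charset=utf-8",
        },
        method="POST",
    )


req = build_synthesize_request("Hello from a TTS sketch.", voice_name="en-US-Wavenet-D")
print(req.get_method(), req.full_url)
```

Sending it with `urllib.request.urlopen(req)` returns JSON whose `audioContent` field holds base64-encoded audio, which `base64.b64decode` turns into MP3 bytes. Google's official client libraries wrap the same call if you prefer an SDK.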

Authentication mechanisms typically include API key management and OAuth 2.0 support, ensuring secure access to text to voice services. Rate limiting and usage monitoring tools help developers track consumption and optimize costs. Advanced platforms provide sandbox environments for testing, allowing teams to experiment with different AI voice configurations before production deployment.
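Server-side rate limits are easier to live with when the client throttles itself. A common technique is a token bucket; the sketch below is a generic client-side version, with the rate and burst capacity left as parameters because actual quotas depend on the provider and plan:

```python
import time


class TokenBucket:
    """Client-side limiter: refills `rate` tokens per second up to `capacity`.

    Rate and capacity are placeholders; set them from your provider's quotas.
    """

    def __init__(self, rate: float, capacity: float, clock=time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self.tokens = float(capacity)  # start full so an initial burst is allowed
        self.clock = clock
        self.last = clock()

    def try_acquire(self, cost: float = 1.0) -> bool:
        """Spend `cost` tokens if available; return False (without waiting) if not."""
        now = self.clock()
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False


bucket = TokenBucket(rate=5, capacity=10)  # ~5 requests/s, bursts of up to 10
if bucket.try_acquire():
    pass  # safe to send the next synthesis request
```

Passing `cost=len(text)` instead of the default 1 turns the same bucket into a characters-per-second budget, which suits providers that meter characters rather than requests. The injectable `clock` exists only to make the limiter testable.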

Most enterprise-grade solutions include batch processing capabilities, enabling efficient conversion of large document sets. Error handling and retry mechanisms ensure reliable operation, while detailed logging helps troubleshoot integration issues. Some platforms offer custom model training APIs, allowing organizations to develop specialized text to speech voices tailored to their specific terminology or brand requirements.
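Retry handling for transient failures (throttling responses, timeouts, server errors) is often implemented as exponential backoff with jitter. A minimal sketch, assuming your client wraps retryable failures in a hypothetical `TransientError`; which HTTP status codes count as retryable is provider-specific:

```python
import random
import time


class TransientError(Exception):
    """Hypothetical marker for retryable failures (throttling, timeouts, 5xx)."""


def with_retries(call, max_attempts=5, base_delay=0.5, max_delay=8.0, sleep=time.sleep):
    """Call `call()` until it succeeds, backing off exponentially between attempts."""
    for attempt in range(1, max_attempts + 1):
        try:
            return call()
        except TransientError:
            if attempt == max_attempts:
                raise  # out of attempts: surface the last error
            cap = min(max_delay, base_delay * 2 ** (attempt - 1))  # 0.5, 1, 2, 4, 8...
            sleep(random.uniform(0, cap))  # "full jitter": random delay up to the cap
```

Randomizing the delay keeps many clients that failed at the same moment from retrying in lockstep. Any other exception, such as one raised for invalid SSML, propagates immediately instead of being retried.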

File Format and Export Options

Output format flexibility determines how effectively you can integrate generated audio into your existing workflows. Standard audio formats include MP3 for web applications, WAV for high-quality desktop software, and OGG for open-source projects. Professional platforms support multiple bit rates and sample rates, typically ranging from 16 kHz for telephony applications to 48 kHz for broadcast-quality content.

| Format | Best Use Case | File Size | Quality |
|---|---|---|---|
| MP3 | Web streaming, mobile apps | Small | Good |
| WAV | Professional audio, editing | Large | Excellent (uncompressed) |
| OGG | Open-source projects | Medium | Very Good |
| FLAC | Lossless archival | Large | Excellent (lossless) |
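Of these formats, WAV is the one Python's standard library can write directly; MP3, OGG, and FLAC encoding need external encoders. The sketch below wraps mono float samples as 16-bit PCM WAV in memory. The 24 kHz default sample rate is an arbitrary assumption; match whatever rate your TTS engine actually returns.

```python
import io
import math
import struct
import wave


def float_samples_to_wav(samples, sample_rate=24_000) -> bytes:
    """Encode mono float samples in [-1.0, 1.0] as a 16-bit PCM WAV file in memory.

    The 24 kHz default is an assumption; use the rate your TTS engine returns.
    """
    frames = b"".join(
        struct.pack("<h", int(max(-1.0, min(1.0, s)) * 32767))  # clip, then 16-bit little-endian
        for s in samples
    )
    buffer = io.BytesIO()
    with wave.open(buffer, "wb") as wav:
        wav.setnchannels(1)          # mono
        wav.setsampwidth(2)          # 2 bytes per sample = 16-bit
        wav.setframerate(sample_rate)
        wav.writeframes(frames)
    return buffer.getvalue()


# A 0.1-second 440 Hz test tone stands in for synthesized speech.
tone = [0.5 * math.sin(2 * math.pi * 440 * n / 24_000) for n in range(2_400)]
wav_bytes = float_samples_to_wav(tone)  # write these bytes to a .wav file to listen
```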

Advanced platforms support SSML (Speech Synthesis Markup Language) input, enabling precise control over pronunciation, emphasis, and timing. Some services provide subtitle generation alongside audio output, creating synchronized text tracks for accessibility compliance. Cloud-based solutions often include direct integration with content delivery networks, streamlining distribution of generated audio files.
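The SSML elements most relevant to pronunciation and timing are `<say-as>`, `<sub>`, and `<break>`. The helpers below (hypothetical names, not a library API) assemble them with proper XML escaping, which matters as soon as the text contains characters such as `&`. The `interpret-as` and `format` values shown (`cardinal`, `date`, `ymd`) are common but not universal, so check which values your provider accepts.

```python
from xml.sax.saxutils import escape, quoteattr


def say_as(text: str, interpret_as: str, fmt: str = "") -> str:
    """<say-as>: hint how to read numbers, dates, etc. Accepted values vary by provider."""
    attrs = f"interpret-as={quoteattr(interpret_as)}"
    if fmt:
        attrs += f" format={quoteattr(fmt)}"
    return f"<say-as {attrs}>{escape(text)}</say-as>"


def sub(written: str, spoken: str) -> str:
    """<sub>: keep the written form but speak the alias instead."""
    return f"<sub alias={quoteattr(spoken)}>{escape(written)}</sub>"


def pause(milliseconds: int) -> str:
    """<break>: insert an explicit pause."""
    return f'<break time="{int(milliseconds)}ms"/>'


ssml = "<speak>" + " ".join([
    escape("Your order of"), say_as("12", "cardinal"),
    escape("units ships on"), say_as("2026-03-05", "date", "ymd") + ".",
    pause(400),
    escape("Questions? Contact"), sub("R&D", "research and development") + ".",
]) + "</speak>"
print(ssml)
```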

Real-Time Processing Performance

Latency performance directly impacts user experience in interactive applications. Real-time text to speech processing requires careful consideration of network overhead, processing time, and audio buffer management. Leading platforms achieve sub-second response times for short text segments, making them suitable for chatbots, virtual assistants, and live applications.

Streaming synthesis allows audio playback to begin before complete text processing finishes, reducing perceived latency. This approach proves particularly valuable for longer content where users can start listening while the system processes remaining text. Geographic server distribution helps minimize network latency, with edge computing capabilities bringing processing closer to end users.
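Streaming works best when text is split at natural boundaries and the first chunk is small, so the first audio arrives quickly. A sketch of a sentence-aligned chunker follows; the character limits are hypothetical tuning parameters, and the regex-based sentence split is deliberately naive (it will also split after abbreviations such as "Dr.").

```python
import re
from typing import Iterator

# Naive sentence boundary: whitespace following ., !, or ?.
SENTENCE_BOUNDARY = re.compile(r"(?<=[.!?])\s+")


def stream_chunks(text: str, max_chars: int = 200, first_max_chars: int = 60) -> Iterator[str]:
    """Yield sentence-aligned chunks; the first is kept short to cut time-to-first-audio.

    Limits are hypothetical tuning values. A sentence longer than the limit is
    yielded on its own rather than split mid-sentence.
    """
    current, limit = "", first_max_chars
    for sentence in SENTENCE_BOUNDARY.split(text.strip()):
        if not sentence:
            continue
        if current and len(current) + 1 + len(sentence) > limit:
            yield current
            current, limit = sentence, max_chars  # later chunks may be larger
        else:
            current = f"{current} {sentence}" if current else sentence
    if current:
        yield current


for chunk in stream_chunks("Welcome back. Today we cover streaming synthesis. Let's begin!"):
    print(chunk)  # each chunk would be sent for synthesis while earlier audio plays
```

Production code would add a secondary split (on clauses, or a hard cut) for sentences that exceed the limit on their own.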

Concurrent processing limits vary significantly between platforms, affecting scalability for high-traffic applications. Enterprise solutions typically support hundreds of simultaneous requests, while some services implement intelligent queuing systems to manage peak loads. Performance monitoring tools help identify bottlenecks and optimize processing workflows for consistent user experiences.
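On the client side, staying under a provider's concurrency limit can be as simple as a semaphore around each request. A sketch with `asyncio`, assuming a hypothetical async `synthesize` function that wraps whatever SDK or HTTP client you use:

```python
import asyncio


async def synthesize_all(texts, synthesize, max_concurrent: int = 4):
    """Run the async `synthesize` callable (hypothetical adapter) over texts,
    with at most `max_concurrent` requests in flight; results keep input order."""
    semaphore = asyncio.Semaphore(max_concurrent)

    async def one(text):
        async with semaphore:  # waits here once the limit is reached
            return await synthesize(text)

    return await asyncio.gather(*(one(t) for t in texts))


async def fake_synthesize(text):
    """Stand-in for a real TTS call: simulates network latency."""
    await asyncio.sleep(0.01)
    return text.encode()


results = asyncio.run(synthesize_all(["one.", "two.", "three."], fake_synthesize, max_concurrent=2))
```

`asyncio.gather` returns results in input order even when requests finish out of order, which keeps audio segments aligned with their source text.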

Memory usage and computational requirements scale with voice quality and processing complexity. Real-time applications benefit from platforms that offer multiple quality tiers, allowing developers to balance performance against resource consumption based on specific use case requirements.

Use Case Applications and Industry Solutions

Modern text to speech technology serves diverse industries and user groups, transforming how organizations communicate, create content, and ensure accessibility. Understanding these practical applications helps determine which AI text to speech solution best fits specific business needs and user requirements.

Accessibility and Learning Support

Text to speech technology plays a crucial role in creating inclusive digital experiences for users with visual impairments, dyslexia, and other reading challenges. Educational institutions increasingly rely on these tools to support diverse learning styles and comply with accessibility standards like WCAG 2.1 and Section 508.

Students with learning disabilities benefit significantly from AI text to speech features that allow them to consume written content audibly. Research shows that combining visual and auditory learning channels improves comprehension rates by up to 40% for students with dyslexia. Modern platforms offer adjustable reading speeds, highlighting capabilities, and voice selection options that cater to individual preferences.

Libraries and educational publishers integrate text to voice functionality into digital textbooks and learning management systems. These implementations enable students to switch seamlessly between reading and listening modes, supporting different learning environments from quiet study sessions to hands-free review while commuting.

Workplace accessibility requirements drive enterprise adoption of text to speech solutions. Companies use these tools to make internal documents, training materials, and communication platforms accessible to employees with visual impairments or reading difficulties, ensuring compliance with disability accommodation laws.

Content Creation and Marketing

Content creators leverage AI text to speech technology to produce engaging multimedia experiences without expensive voice talent or recording equipment. Podcast producers use these tools for intro segments, automated announcements, and multilingual content distribution.

Marketing teams employ text to speech voices for creating promotional videos, social media content, and interactive web experiences. E-learning companies particularly benefit from consistent, professional narration across extensive course catalogs. The ability to update content quickly without re-recording makes these solutions invaluable for rapidly changing industries.

Video game developers integrate dynamic text to voice systems for character dialogue, especially in games with extensive narrative content or user-generated text elements. This approach reduces production costs while maintaining immersive experiences across multiple languages and character types.

Social media platforms increasingly feature text to speech functionality, allowing users to convert written posts into audio content for accessibility and engagement purposes. Content creators use these features to repurpose blog posts, articles, and social media content into podcast-style audio for broader audience reach.

Business Communication Tools

Enterprise communication platforms integrate text to speech capabilities to enhance productivity and accessibility in professional environments. Call centers use these systems for automated customer service responses, hold messages, and multilingual support without maintaining extensive voice recording libraries.

Healthcare organizations employ AI text to speech technology for patient communication systems, medication reminders, and accessibility compliance in electronic health records. These implementations ensure critical information reaches patients effectively, regardless of reading ability or visual capabilities.

Training and development departments use text to voice solutions to create consistent educational content across global teams. This approach delivers uniform narration and messaging in every region while reducing the time and cost associated with professional voice recording.

Meeting documentation and note-taking applications benefit from text to speech integration, allowing users to review meeting summaries audibly while multitasking. These features prove particularly valuable for busy executives and team members who need to process information efficiently during commutes or while performing other tasks.

Customer support teams leverage text to speech technology for creating help documentation that serves both visual and auditory learners. This dual-mode approach reduces support ticket volume while improving customer satisfaction through more accessible self-service options.

Pricing Models and Value Analysis

Understanding the cost structure of text to speech solutions is crucial for making informed decisions that align with your budget and requirements. The pricing landscape varies significantly across platforms, with different models offering distinct advantages depending on your usage patterns and organizational needs.

Free vs Premium Feature Comparison

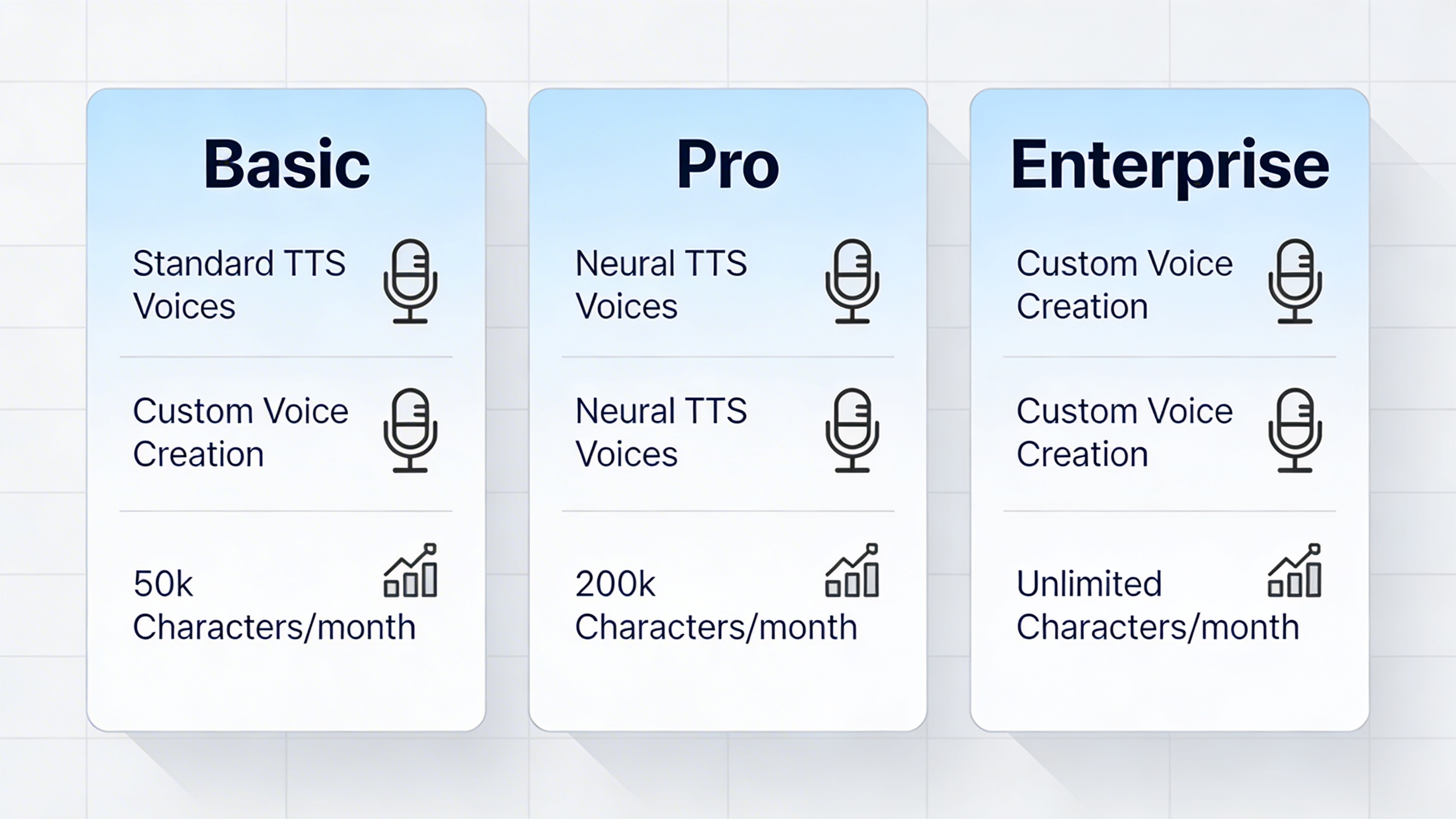

Most text to speech platforms follow a freemium model, providing basic functionality at no cost while reserving advanced features for paid tiers. Free versions typically include a limited selection of standard voices, basic audio formats, and restricted character limits per month. These limitations often range from 5,000 to 20,000 characters monthly, which suffices for personal use or small-scale testing.

Premium tiers unlock the full potential of AI text to speech technology. Subscribers gain access to neural voices that sound remarkably human, extensive voice libraries spanning multiple languages and accents, and advanced customization options including speech speed, pitch, and emphasis controls. Commercial usage rights, API access, and bulk processing capabilities are typically reserved for paid plans, making them essential for business applications.

| Feature Category | Free Tier | Premium Tier |
|---|---|---|
| Voice Quality | Standard synthetic voices | Neural voices |
| Monthly Limits | 5,000-20,000 characters | 500,000+ characters |
| Voice Selection | 10-20 basic voices | 200+ voices |
| Commercial Use | Personal only | Full commercial rights |

Usage-Based vs Subscription Models

Text to voice platforms typically employ either usage-based pricing or subscription models, each with distinct advantages. Usage-based pricing charges per character or word processed, making it ideal for organizations with irregular or seasonal content needs. Because costs track actual consumption, this model makes per-project costs easy to estimate and eliminates waste from unused allowances.

Subscription models offer fixed monthly or annual fees for predetermined usage limits. These plans work best for consistent, high-volume users who can accurately forecast their text to speech requirements. Many providers offer tiered subscriptions that scale with usage, allowing businesses to upgrade as their needs grow without switching platforms.

Hybrid models combine both approaches, providing a base subscription with overage charges for additional usage. This flexibility appeals to growing organizations that need predictable baseline costs with room for expansion during peak periods.
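Which model is cheapest depends on monthly volume, and a small comparison makes the crossover points visible. All plan terms below are hypothetical (only the $16 per million rate echoes a price quoted earlier in this guide), so substitute the actual offers you are evaluating:

```python
# All plan terms below are hypothetical; substitute the offers you are comparing.
def usage_based(chars: int, rate_per_million: float) -> float:
    """Pay only for characters processed."""
    return chars / 1_000_000 * rate_per_million


def subscription(chars: int, monthly_fee: float, cap: int) -> float:
    """Flat fee up to a cap; above it, this plan cannot cover the month."""
    return monthly_fee if chars <= cap else float("inf")


def hybrid(chars: int, base_fee: float, included: int, overage_per_million: float) -> float:
    """Base fee covers `included` characters; the rest is billed as overage."""
    extra = max(0, chars - included)
    return base_fee + extra / 1_000_000 * overage_per_million


PLANS = {
    "usage": lambda c: usage_based(c, 16.0),
    "subscription": lambda c: subscription(c, 29.0, 5_000_000),
    "hybrid": lambda c: hybrid(c, 10.0, 500_000, 12.0),
}


def cheapest(chars: int, plans=PLANS):
    """Return (plan name, {plan: monthly cost}) for a given monthly volume."""
    costs = {name: round(cost(chars), 2) for name, cost in plans.items()}
    return min(costs, key=costs.get), costs


for volume in (100_000, 1_500_000, 3_000_000, 6_000_000):
    print(volume, *cheapest(volume))
```

With these made-up numbers, pay-as-you-go wins at 100,000 characters, the hybrid plan at 1.5 million, the flat subscription at 3 million, and the hybrid plan again at 6 million, once usage exceeds the subscription cap.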

ROI Considerations for Businesses

Calculating return on investment for AI text to speech technology requires evaluating both direct cost savings and productivity improvements. Organizations replacing human voice talent for routine content can achieve significant savings, particularly for multilingual projects where hiring native speakers becomes expensive.

The time savings factor proves equally valuable. Content creators can generate hours of audio in minutes, while accessibility compliance becomes automated rather than requiring manual intervention. Customer service departments using text to speech for automated responses report reduced call handling times and improved consistency in message delivery.

For content-heavy industries, the scalability benefits justify premium pricing. Educational platforms converting textbooks to audio, news organizations producing podcast versions of articles, and e-learning companies creating multilingual courses find that high-quality text to speech voices eliminate bottlenecks that previously limited content production.

When evaluating total cost of ownership, factor in integration expenses, training requirements, and potential workflow changes. The most cost-effective solution balances feature requirements with actual usage patterns, avoiding both feature gaps that require workarounds and expensive capabilities that remain unused.

Choosing the Right Text to Speech Solution

Selecting the optimal text to speech solution requires a systematic evaluation approach that balances current needs with future scalability. The right AI text to speech platform should seamlessly integrate into your existing workflow while delivering consistent voice quality across all use cases.

Assessment Framework for Selection

Begin your evaluation by establishing clear performance benchmarks for voice quality, processing speed, and accuracy. Test each text to voice platform with your actual content to assess how well different text to speech voices handle industry-specific terminology, pronunciations, and formatting. Consider creating a standardized test script that includes challenging elements like numbers, abbreviations, and technical jargon relevant to your domain.

Evaluate the platform’s customization capabilities by examining voice selection diversity, speech rate controls, and pronunciation adjustment features. The best AI text to speech solutions offer granular control over intonation, emphasis, and pause placement. Document how each platform handles different content types, from formal documents to conversational scripts, ensuring the selected solution maintains natural speech patterns across various contexts.

Assess integration complexity by reviewing API documentation, SDK availability, and existing software compatibility. Consider the technical expertise required for implementation and ongoing maintenance. Factor in support quality, response times, and available resources for troubleshooting.

Implementation Best Practices

Start with a pilot deployment focusing on a single use case or department to validate performance and gather user feedback. This approach allows you to identify potential issues and optimize configurations before full-scale rollout. Establish clear success metrics including user adoption rates, content processing volumes, and quality satisfaction scores.

Develop standardized content preparation guidelines to maximize text to speech output quality. This includes formatting best practices, pronunciation guides for specialized terms, and templates for different content types. Train content creators on optimization techniques that improve voice synthesis results.
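A pronunciation guide can be applied mechanically before text is sent for synthesis. The sketch below replaces whole-term matches from a lexicon; the entries are hypothetical examples, and where a platform supports SSML `<sub>` or uploaded pronunciation lexicons, those native mechanisms may be a better home for the same list.

```python
import re
from typing import Dict, Optional

# Hypothetical pronunciation guide: written form -> spoken form.
LEXICON: Dict[str, str] = {
    "SQL": "sequel",
    "kubectl": "cube control",
    "e.g.": "for example",
    "TTS": "text to speech",
}


def apply_lexicon(text: str, lexicon: Optional[Dict[str, str]] = None) -> str:
    """Replace whole-term matches with their spoken forms before synthesis."""
    lexicon = LEXICON if lexicon is None else lexicon
    if not lexicon:
        return text
    # Longest terms first so the most specific entry wins when terms overlap.
    terms = sorted(lexicon, key=len, reverse=True)
    # The lookarounds stop matches inside longer words such as "MySQL".
    pattern = re.compile(r"(?<!\w)(" + "|".join(map(re.escape, terms)) + r")(?!\w)")
    return pattern.sub(lambda m: lexicon[m.group(1)], text)


print(apply_lexicon("Query SQL, e.g. with kubectl."))
```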

Create fallback procedures for system maintenance or unexpected outages. Consider implementing multiple text to speech providers for critical applications, ensuring business continuity through redundant voice generation capabilities.
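A multi-provider setup needs a failover rule. A minimal sketch, assuming each provider is wrapped in an adapter function that takes text, returns audio bytes, and raises a hypothetical `ProviderError` on failure:

```python
from typing import Callable, List, Sequence, Tuple


class ProviderError(Exception):
    """Hypothetical error that each provider adapter raises when synthesis fails."""


Synthesizer = Callable[[str], bytes]


def synthesize_with_failover(text: str,
                             providers: Sequence[Tuple[str, Synthesizer]]) -> Tuple[str, bytes]:
    """Try providers in priority order; return (provider name, audio bytes)."""
    errors: List[str] = []
    for name, synthesize in providers:
        try:
            return name, synthesize(text)
        except ProviderError as exc:
            errors.append(f"{name}: {exc}")  # remember why, then try the next one
    raise ProviderError("all providers failed; " + "; ".join(errors))


def primary(text: str) -> bytes:  # hypothetical adapter simulating an outage
    raise ProviderError("timeout")


def backup(text: str) -> bytes:   # hypothetical adapter returning placeholder audio
    return b"audio:" + text.encode()


print(synthesize_with_failover("Hello", [("primary", primary), ("backup", backup)]))
```

Since voices differ between vendors, returning the provider name lets callers log or flag output that came from the backup, or map to a closely matching voice on the secondary provider.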

Future-Proofing Your TTS Investment

Evaluate each platform’s development roadmap and commitment to emerging voice technologies. Look for providers investing in neural voice synthesis, real-time processing improvements, and expanded language support. Consider how well the platform adapts to evolving accessibility standards and regulatory requirements.

Assess scalability potential by examining pricing structures for increased usage, additional voice options, and enhanced features. The ideal solution should accommodate growth without requiring complete system replacement. Review the provider’s track record for maintaining backward compatibility during updates and feature additions.

Consider the platform’s approach to data privacy and security, particularly important for organizations handling sensitive content. Evaluate compliance with industry standards and regional regulations that may affect long-term viability.