In a world where 85% of social media videos are watched without sound and 466 million people globally experience disabling hearing loss, closed captions have evolved from an accessibility feature to an essential component of modern video content. These synchronized text overlays do more than transcribe dialogue—they create inclusive experiences that benefit everyone, from commuters watching videos on silent phones to language learners improving their comprehension.

Understanding Closed Captions: More Than Just Subtitles

Closed captions are a comprehensive text representation of all audio content in a video, distinguished by the viewer’s ability to toggle them on or off. Unlike subtitles, which primarily translate or transcribe dialogue for hearing audiences, closed captions assume zero audio access and include crucial non-speech elements like [door creaking], [dramatic music swells], or (whispers) to convey the complete auditory experience.

The term “closed” refers to this optional display feature—captions remain hidden until activated by the viewer, giving individuals complete control over their viewing experience. This flexibility has established closed captions as the gold standard for video accessibility across platforms, from Netflix and YouTube to corporate training videos and educational content.

The Technical Architecture Behind CC Technology

Modern closed caption systems operate through sophisticated synchronization mechanisms that align text with corresponding audio moments down to milliseconds. Caption data exists in various formats—SRT (SubRip Text), WebVTT (Web Video Text Tracks), and SCC (Scenarist Closed Caption)—each containing timestamp information and text content structured for optimal display.
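To make the difference between formats concrete, here is a minimal Python sketch that converts simple SRT text to WebVTT. The helper name `srt_to_webvtt` and the sample cues are illustrative assumptions. The sketch relies on only two facts about the formats: a WebVTT file begins with a `WEBVTT` header line, and its timestamps use a period rather than a comma before the milliseconds. It is not a full parser and ignores styling tags and other edge cases.

```python
import re

# Matches an SRT timing line such as "00:00:01,000 --> 00:00:04,000"
_SRT_TIMING = re.compile(r"(\d{2}:\d{2}:\d{2}),(\d{3}) --> (\d{2}:\d{2}:\d{2}),(\d{3})")

# Illustrative sample cues (assumed content, not taken from a real file)
SAMPLE_SRT = """1
00:00:01,000 --> 00:00:04,000
[door creaking]

2
00:00:04,500 --> 00:00:06,000
(whispers) Who's there?
"""


def srt_to_webvtt(srt_text: str) -> str:
    """Convert simple SRT text to WebVTT (hypothetical helper, not a full parser)."""
    lines = ["WEBVTT", ""]  # WebVTT files start with this header and a blank line
    for line in srt_text.strip().splitlines():
        # Swap the comma before the milliseconds for a period on timing lines;
        # cue numbers and caption text pass through unchanged.
        lines.append(_SRT_TIMING.sub(r"\1.\2 --> \3.\4", line.rstrip()))
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    print(srt_to_webvtt(SAMPLE_SRT))
```

The numeric cue counters from the SRT input are kept, since WebVTT accepts an optional identifier line before each cue.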

Video players interpret these caption files through built-in decoders that render text overlays dynamically. HTML5 video players utilize the track element to link caption files with video sources, enabling the familiar CC button that millions of users click daily. The rendering engine handles text positioning, line breaks, and display duration while maintaining readability across different screen sizes and resolutions.
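As a rough illustration of how a caption file is linked to a video, the Python sketch below builds the HTML markup a page might serve. The function names and file paths are hypothetical. The `kind`, `src`, `srclang`, `label`, and `default` attributes are standard attributes of the `track` element, and browsers generally expect the linked file to be WebVTT.

```python
from html import escape


def caption_track_tag(src, srclang, label, default=False):
    """Build an HTML5 <track> tag for a caption file (hypothetical helper)."""
    attrs = [
        'kind="captions"',
        f'src="{escape(src, quote=True)}"',
        f'srclang="{escape(srclang, quote=True)}"',
        f'label="{escape(label, quote=True)}"',
    ]
    if default:
        # Marks this track as the one to enable when the viewer has no preference
        attrs.append("default")
    return f"<track {' '.join(attrs)}>"


def video_with_captions(video_src, track_tags):
    """Wrap track tags in a <video> element that shows the native controls."""
    inner = "\n".join(f"  {tag}" for tag in track_tags)
    return f'<video controls src="{escape(video_src, quote=True)}">\n{inner}\n</video>'


if __name__ == "__main__":
    # File names below are placeholders for illustration only
    english = caption_track_tag("captions/intro.en.vtt", "en", "English", default=True)
    print(video_with_captions("intro.mp4", [english]))
```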

Broadcasting Standards and Digital Delivery

Analog television broadcasts in the NTSC system embed caption data in Line 21 of the vertical blanking interval, an ingenious use of otherwise unused signal space. Digital broadcasts and streaming platforms employ more sophisticated methods, encoding captions as separate data packets within video streams or delivering them as sidecar files that download alongside video content.

These technical standards ensure compatibility across devices, from smart TVs and mobile phones to desktop computers and digital signage. The CEA-608 and CEA-708 standards govern broadcast captions in North America, while DVB subtitles serve European markets, each with specific formatting capabilities and display requirements.

Legal Requirements and ADA Compliance

The Americans with Disabilities Act (ADA) and Federal Communications Commission (FCC) regulations require closed captions for many categories of public-facing video content, making compliance a legal obligation as well as an ethical one. Title III of the ADA requires places of public accommodation to ensure effective communication with people who have hearing, vision, or speech disabilities.

Compliance Standards and Requirements

The FCC’s closed captioning rules apply to most television programming, subject to limited exemptions, and set quality standards for accuracy, synchronicity, program completeness, and placement. Video content that was previously broadcast on television with captions must also include captions when distributed online, extending traditional media requirements to digital platforms.

Educational institutions face additional requirements under Section 504 and Section 508 of the Rehabilitation Act, mandating captioned video content for students with disabilities. The Web Content Accessibility Guidelines (WCAG) 2.1, widely adopted as the international standard, require captions for prerecorded audio in synchronized media at Level A (Success Criterion 1.2.2) and for live audio at Level AA (Success Criterion 1.2.4).

The Business Case: Beyond Compliance

While legal compliance drives initial caption adoption, the business benefits extend far beyond avoiding lawsuits. Studies show that captioned videos on social media receive 80% more views and 12% better engagement than uncaptioned content. Search engines index caption text, improving video SEO and discoverability—a crucial advantage in content-saturated digital markets.

Engagement and Retention Benefits

Captions are reported to increase video completion rates by 80% on average, because viewers can follow content in sound-sensitive settings such as offices, public transport, or late-night browsing. This silent viewing behavior dominates mobile consumption, where autoplay without sound has become the default user experience across platforms.

Audiences worldwide benefit from captions, even when content is in their native language: accents, technical terminology, and rapid speech all become easier to follow with text support. Educational research demonstrates that students using captions show improved vocabulary acquisition, better content retention, and higher test scores compared to audio-only learning.

Creating Professional-Quality Closed Captions

Effective closed captions require more than accurate transcription—they demand careful attention to timing, formatting, and readability. Professional captioners follow established guidelines that balance information density with viewing comfort, ensuring captions enhance rather than distract from video content.

Timing and Synchronization Best Practices

Captions should appear on screen for sufficient time to be read comfortably, typically following the two-second minimum for short phrases. Reading speed guidelines recommend 160-180 words per minute for general audiences, though educational or technical content may require slower rates. Caption breaks should align with natural speech patterns, avoiding mid-word or mid-phrase splits that disrupt comprehension.
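The timing rule above can be expressed as a small calculation. The following Python sketch assumes the lower bound of the quoted reading speed (160 words per minute) and the two-second minimum as defaults. The function names are hypothetical, and a real captioning tool would likely also account for line count and shot changes.

```python
def min_display_seconds(text, words_per_minute=160.0, floor_seconds=2.0):
    """Estimate how long a caption should stay on screen.

    Uses the reading-speed guideline, but never returns less than the
    two-second minimum applied to short phrases.
    """
    word_count = len(text.split())
    reading_seconds = word_count * 60.0 / words_per_minute
    return max(floor_seconds, reading_seconds)


def cue_is_readable(text, start_seconds, end_seconds, words_per_minute=160.0):
    """Check whether a cue's actual duration meets the estimated minimum."""
    return (end_seconds - start_seconds) >= min_display_seconds(text, words_per_minute)


if __name__ == "__main__":
    short = "Hello there."
    longer = "Captions should stay up long enough to read"
    print(min_display_seconds(short))   # two words: the two-second floor applies
    print(min_display_seconds(longer))  # eight words at 160 words per minute
    print(cue_is_readable(longer, 10.0, 12.0))
```

Passing a lower `words_per_minute` value models the slower rates suggested for educational or technical content.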

Speaker identification maintains clarity in multi-person dialogues, using consistent formatting like “SARAH:” or “[Sarah]” before dialogue. Sound effect descriptions should be concise yet descriptive, using established conventions like [applause], [phone ringing], or [ominous music] to convey auditory atmosphere without overwhelming the visual space.

Advanced Caption Features and Formatting

Modern caption formats support sophisticated styling options that enhance viewer experience. Color coding differentiates speakers without explicit labels, while positioning variations indicate off-screen dialogue or narrative voice-overs. These advanced features transform basic transcription into immersive reading experiences that parallel the richness of audio tracks.

Multi-Language Support and Localization

Global content distribution requires caption localization beyond simple translation. Cultural references, idioms, and humor need adaptation for international audiences while maintaining timing synchronization with original audio. Professional localization also accounts for text expansion and reading speed: German text typically requires about 30% more space than English, which affects both line breaks and caption display duration.
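One way to see why longer translations affect timing is to hold the reading rate constant in characters per second. The Python sketch below is an illustrative assumption rather than an established localization formula: it scales the source cue's duration by the ratio of translated length to source length, and the function name is hypothetical.

```python
def required_seconds(source_text, source_seconds, translated_text):
    """Estimate the duration a translated cue needs at the source's reading rate.

    Assumes viewers read the translation at the same characters-per-second
    rate as the source caption (a simplifying assumption).
    """
    if not source_text:
        raise ValueError("source_text must not be empty")
    chars_per_second = len(source_text) / source_seconds
    return len(translated_text) / chars_per_second


if __name__ == "__main__":
    # Placeholder strings: a 40-character source and a translation 30% longer
    source = "x" * 40
    translation = "y" * 52
    print(required_seconds(source, 2.0, translation))
```

If the audio leaves no room for the longer duration, the translation usually has to be condensed instead.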

Industry-Specific Caption Requirements

Different industries impose unique captioning challenges and standards. Live sports broadcasting requires real-time captioning with minimal delay, often using stenographers who type at 200+ words per minute using specialized keyboards. News broadcasts demand accuracy for proper names, locations, and breaking developments while maintaining synchronization with rapidly changing visuals.

Entertainment content balances dialogue transcription with atmospheric elements—captioning comedic timing, musical lyrics, and dramatic pauses that contribute to storytelling. Documentary films require careful attention to speaker identification when multiple interviewees appear, often including location or title descriptions for context.

Corporate training videos emphasize terminology accuracy and procedural clarity, with captions serving as searchable reference materials for employees. Healthcare and safety videos require precise captioning of warnings, dosage instructions, and emergency procedures where errors could have serious consequences.

The Future of Closed Caption Technology

Artificial intelligence is revolutionizing caption creation through automatic speech recognition (ASR) systems that can approach human accuracy on clear audio. Machine learning models trained on diverse accents, languages, and speaking styles generate preliminary captions that professional editors refine, dramatically reducing production time and costs.

Emerging Technologies and Innovations

Augmented reality (AR) captions promise new possibilities for live events, projecting personalized text overlays through smart glasses or mobile devices. These systems could offer individual language preferences, reading speeds, and formatting options without affecting other viewers’ experiences.

Emotion recognition technology could add expressive dimensions to captions by conveying tone through formatting variations such as italics for sarcasm, bold for emphasis, or color gradients for emotional intensity. These enhancements would help convey subtleties that traditional captions might miss, which is particularly important for dramatic content or nuanced communication.

Implementing Effective Caption Workflows

Successful caption implementation requires systematic workflows that balance automation with human oversight. Organizations should establish clear responsibilities for caption creation, review, and publication, ensuring consistent quality across all video content.

Budget considerations influence workflow decisions—automatic captioning costs pennies per minute but requires editing, while professional human captioning costs more but delivers broadcast-ready quality. Hybrid approaches leverage ASR for initial drafts with human review for accuracy, optimizing both cost and quality.

Quality Assurance and Testing

Caption quality assurance involves multiple review stages checking accuracy, timing, formatting, and technical compliance. Automated tools can verify caption file syntax and timing consistency, while human reviewers ensure contextual accuracy and appropriate non-speech descriptions.
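An automated timing check of the kind described above might look like the following Python sketch. It is a simplified illustration, not a complete validator: the function name is hypothetical, and it only recognizes `hh:mm:ss` timing lines with a comma or period before the milliseconds, so WebVTT cue settings or timestamps without an hours field would be reported as malformed.

```python
import re

# Accepts SRT-style (comma) and WebVTT-style (period) millisecond separators
_TIMING = re.compile(
    r"^(\d{2}):(\d{2}):(\d{2})[,.](\d{3}) --> (\d{2}):(\d{2}):(\d{2})[,.](\d{3})$"
)


def _to_seconds(hours, minutes, seconds, millis):
    return int(hours) * 3600 + int(minutes) * 60 + int(seconds) + int(millis) / 1000


def check_timing(caption_text):
    """Return a list of human-readable timing problems (empty if none found)."""
    problems = []
    previous_end = 0.0
    for number, line in enumerate(caption_text.splitlines(), start=1):
        if "-->" not in line:
            continue  # cue numbers, caption text, and blank lines
        match = _TIMING.match(line.strip())
        if match is None:
            problems.append(f"line {number}: malformed timing line")
            continue
        parts = match.groups()
        start, end = _to_seconds(*parts[:4]), _to_seconds(*parts[4:])
        if end <= start:
            problems.append(f"line {number}: cue ends before it starts")
        if start < previous_end:
            problems.append(f"line {number}: cue overlaps the previous cue")
        previous_end = max(previous_end, end)
    return problems


if __name__ == "__main__":
    sample = "1\n00:00:05,000 --> 00:00:04,000\nA\n\n2\n00:00:03,000 --> 00:00:06,000\nB\n"
    for problem in check_timing(sample):
        print(problem)
```

A check like this catches structural errors only. Contextual accuracy and non-speech descriptions still need a human reviewer.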

Testing across platforms ensures captions display correctly on different devices and players. Mobile testing is particularly crucial, as smaller screens and varied orientations can affect caption readability. User feedback mechanisms help identify issues and improve caption quality over time.

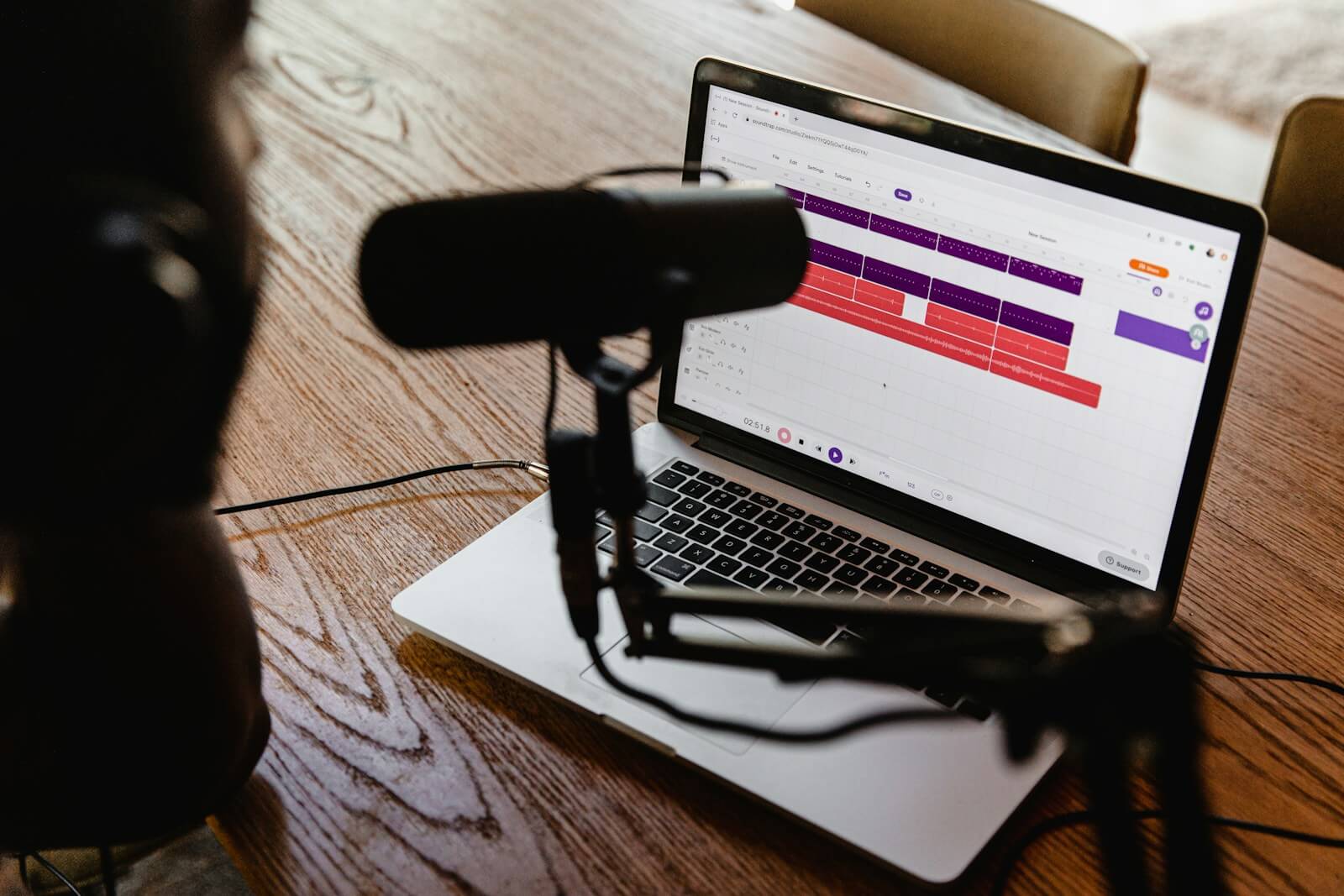

How Söz AI Transforms Closed Caption Creation

Söz AI revolutionizes the closed caption workflow by combining cutting-edge AI transcription with intelligent formatting capabilities. The platform automatically generates time-synchronized captions from any audio or video source, dramatically reducing the traditional timeline from days to minutes.

The system’s advanced speaker diarization technology automatically identifies different voices in conversations, properly attributing dialogue without manual intervention. Smart punctuation and paragraph breaks create natural reading flow, while customizable export options produce caption files compatible with all major video platforms and broadcast standards.

Beyond basic transcription, Söz AI’s integration with AssemblyAI and LeMUR technologies enables automatic content analysis, identifying key moments for chapter markers and generating descriptive summaries that enhance navigation for all users. The platform’s accuracy exceeds industry standards, requiring minimal editing even for technical or specialized content.

Ready to streamline your closed caption workflow? Try Söz AI free today and discover how AI-powered transcription can make your video content accessible to everyone in minutes.